Wrapping up another round of UX testing

Thanks again to Sonya Betz of the University of Alberta Libraries for leading another valuable round of UX testing for OJS 3 — and of course to all of our volunteer testers for taking the time to help us improve our software for everyone.

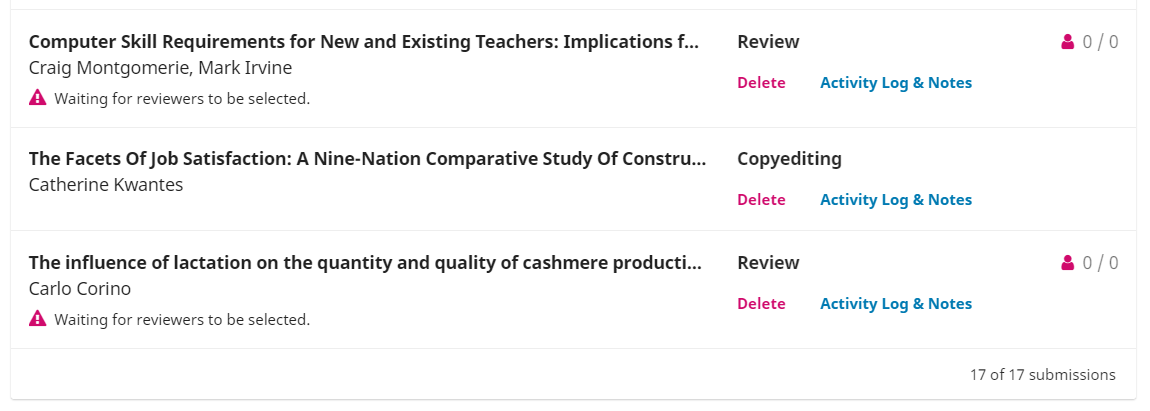

In this latest round, we focused on some new developments to improve the list of submissions that editors see when they log in to the system.

We learned that we definitely got some things right, but still need to review some others.

Overall, testers liked the new approach to the submission list, including the addition of notification messages (e.g., “Waiting for Reviewers to be selected”) to help them see at a glance what actions are required.

Our first attempt at some iconography was less successful, however, with testers having trouble understanding the meaning of some of the icons, colours, and numbering. We also heard that sorting and/or filtering of submission lists is critical, especially for larger journals with potentially long submission lists. Removing submission identification numbers was also controversial: it provides a cleaner interface (which is good!), but some editors rely heavily on those numbers to track their work, so losing them can be a problem.

Here’s a list of some of the new GitHub issues generated from this round of testing:

- Add filtering options to new submissions list (#2612)

- Add contextual information to icons in new submission list (#2613)

- Deleting from submission list is too prominent and rarely used (#2615)

- Add counts of submissions to submission tabs and list filters (#2617)

We also discovered some issues beyond the focus on submission lists, and are working to improve these as well:

- Color of tasks panel is confusing (#2576)

- Change “Add” to “Assign” in participants grid (#2616)

- Confusion about two-step reviewer assignment process (#2614)

If any of these issues are of particular interest, you can “watch” them to receive updates.

Usability testing is now a standard part of our development cycle, so you’ll be hearing more about these outcomes in the future.

If you’d like to volunteer to do some testing with us (it takes about an hour and is done remotely), let us know.